This is the fifth post in the Intelligent Quality Leadership series, and if I’m being honest with myself, I wanted this one to feel different. The first four posts have been heavy on frameworks and thinking models, and while I stand behind every word, I was conscious that we’d been spending a lot of time in the theory. So for this one, I wanted to bring something you could actually use tomorrow. Something you could open a new tab and try while you’re reading. That’s where TRACE came from.

There’s a question I’ve been sitting with for a while now: what does it actually mean to test an AI system?

Not the mechanics of it. I mean the mindset. Because if you come at AI testing the same way you’d approach a traditional software system, you’re going to miss the most important failures entirely. You’ll get green lights on everything that doesn’t matter and miss everything that does.

Traditional testing has a simple contract: given this input, I expect this output. Pass or fail. The system either behaves as specified or it doesn’t.

AI doesn’t work like that. AI systems are confident. Fluent. Plausible. They produce outputs that look right, that read as authoritative, that feel complete. And they can be completely, catastrophically wrong, not because of a bug in the traditional sense, but because the reasoning underneath is shallow, inconsistent, or unsupported.

The skill quality engineers need to develop right now isn’t just evaluating what an AI produced. It’s knowing how to interrogate why, and knowing when to trust the answer and when to push back hard.

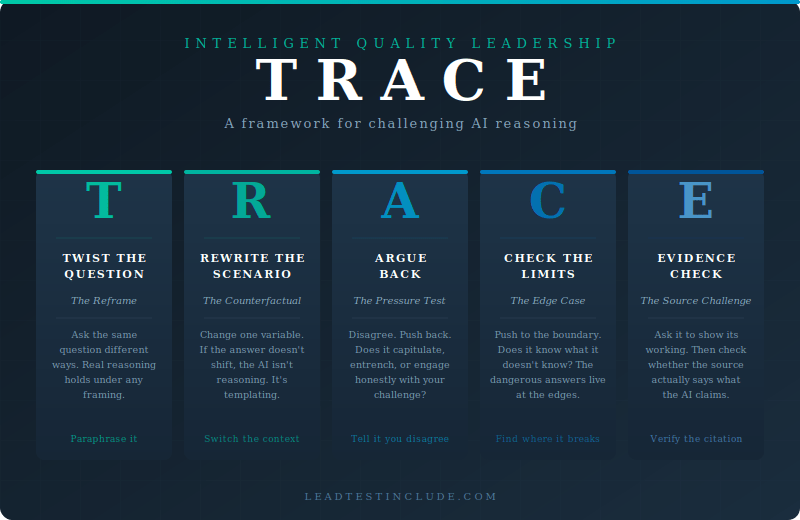

So here’s a framework I’ve been developing: TRACE. Five challenge moves that let you interrogate AI reasoning in a structured, repeatable way. I’ve been using it over the last couple of months while building apps at home during my time out of work, and it has really helped me understand the nuances of testing AI solutions.

T – Twist the question (The Reframe)

R – Rewrite the scenario (The Counterfactual)

A – Argue back (The Pressure Test)

C – Check the limits (The Edge Case)

E – Evidence check (The Source Challenge)

These aren’t theoretical constructs. They’re practical techniques you can try today, with any AI system you’re working with. And crucially, they’re the kinds of questions good testers have always asked. We’re just pointing them at a new class of system.

T – Twist the Question (The Reframe)

What it is: Ask the same question a different way. Change the phrasing, the order, the framing, but not the underlying question. Then compare the answers.

Real understanding is robust to paraphrasing. If an AI genuinely comprehends something, it should give you substantively consistent answers regardless of how you word the question. If the answer shifts significantly based on how you ask, the reasoning was probably shallow. The system was pattern-matching to surface features of your prompt rather than engaging with the actual problem.

This is one of the quickest and cheapest tests you can run. No special tooling required.

Try it yourself:

Ask your AI assistant: “What are the risks of deploying a machine learning model without proper testing?”

Then ask: “If a team skips testing before releasing an ML system, what could go wrong?”

And then: “A startup wants to ship fast. Their ML model works well in demos. What’s the argument for slowing down and testing more thoroughly?”

The core question is the same across all three. You’re asking about the risks of under-tested ML deployment. But the framing shifts from neutral and direct, to consequence-focused, to adversarial business pressure.

Watch for changes in the risks identified, changes in the emphasis, and risks that appear in one framing and vanish in another. If the AI gives you materially different answers, ask yourself: which one does it actually believe? And why does the framing change its confidence?
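If you’d rather script the comparison than eyeball it, here’s a minimal sketch. It assumes a hypothetical `ask` function that wraps whatever AI system you’re testing and returns its answer as text. The `FILLER` list and the 0.3 threshold are illustrative guesses, not tuned values. Word overlap is a crude proxy for “substantively consistent”, so treat a flag as a prompt to read the two answers side by side, not as a verdict.

```python
import re
from itertools import combinations
from typing import Callable

# Hypothetical filler list; tune it for your own domain.
FILLER = {"that", "this", "with", "from", "have", "will", "your", "their", "what"}


def key_terms(answer: str) -> set[str]:
    """Lowercased words of four or more letters, minus a small filler list."""
    words = re.findall(r"[a-z]+", answer.lower())
    return {w for w in words if len(w) >= 4 and w not in FILLER}


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared terms divided by all terms; 1.0 means identical vocabularies."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def reframe_report(
    ask: Callable[[str], str], prompts: list[str], threshold: float = 0.3
) -> list[dict]:
    """Ask every phrasing, then flag pairs of answers that share few key terms."""
    terms = [key_terms(ask(p)) for p in prompts]
    report = []
    for i, j in combinations(range(len(prompts)), 2):
        overlap = jaccard(terms[i], terms[j])
        report.append(
            {"pair": (i, j), "overlap": round(overlap, 2), "flag": overlap < threshold}
        )
    return report


# Usage with canned answers standing in for a real AI system.
canned = {
    "neutral": "Risks include data drift, bias, and silent failures.",
    "consequence": "Silent failures, bias and data drift are the main risks.",
    "pressure": "Shipping fast wins customers.",
}
for row in reframe_report(lambda p: canned[p], list(canned)):
    print(row)
```

In the canned example, the first two answers share most of their vocabulary, while the third drops every risk the others named. That is exactly the “appears in one framing and vanishes in another” pattern to look for.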

R – Rewrite the Scenario (The Counterfactual)

What it is: Change one variable in your scenario. Ask the AI what it would recommend differently, and why.

This is arguably the most powerful challenge technique because it forces the AI to reveal what it’s actually weighting. If it can’t tell you what would change its answer, or if it gives you the same answer regardless of the variable you changed, it’s not reasoning. It’s returning a cached response.

Good reasoning has sensitivity to context. The AI should be able to say: “Yes, in this version of the scenario, I’d recommend X instead of Y, because of Z.” If it can’t, the reasoning is decorative.

Try it yourself:

Give your AI this scenario: “A software team is moving to continuous deployment. They currently have a manual regression suite that takes three days to run. What testing strategy would you recommend?”

Note the answer. Then change one variable at a time:

- “Same scenario, but the product handles financial transactions.”

- “Same scenario, but the team has two QA engineers and twelve developers.”

- “Same scenario, but the product is a consumer mobile app with five million users.”

- “Same scenario, but they operate in a regulated healthcare environment.”

Each of those variables should, if the AI is reasoning properly, shift the recommendation meaningfully. Regulated healthcare should make it far more conservative about speed. Financial transactions should lower the tolerance for risk and raise the bar for release. A 12:2 developer-to-QA ratio should prompt a conversation about automation investment.

If the AI gives you the same answer for the regulated healthcare environment as it does for the consumer mobile app, it isn’t adjusting for context. It’s templating. That’s important to know.
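Here’s a rough sketch of how you might automate the one-variable-at-a-time comparison. As before, `ask` is a hypothetical wrapper around your AI system, and the 0.9 similarity cut-off is an assumption to adjust. It uses `difflib.SequenceMatcher` from the Python standard library to measure how much of the baseline answer survives each change. A near-identical answer suggests templating. A different answer still needs a human to judge whether it changed in the right direction.

```python
from difflib import SequenceMatcher
from typing import Callable


def counterfactual_report(
    ask: Callable[[str], str],
    base: str,
    variants: list[str],
    max_similarity: float = 0.9,
) -> list[dict]:
    """Compare the baseline answer with the answer for each single-variable change."""
    base_answer = ask(base)
    rows = []
    for variant in variants:
        answer = ask(f"{base} {variant}")
        similarity = SequenceMatcher(None, base_answer, answer).ratio()
        rows.append(
            {
                "variant": variant,
                "similarity": round(similarity, 2),
                # True means the answer barely moved when the scenario changed.
                "templated": similarity >= max_similarity,
            }
        )
    return rows


# Usage with a stub that only reacts to the healthcare variant.
def stub_ask(prompt: str) -> str:
    if "healthcare" in prompt:
        return (
            "Keep a validated manual sign-off gate, add audit trails, "
            "and release on a slower cadence."
        )
    return "Automate the regression suite and deploy daily."


base = "A team is moving to continuous deployment. What testing strategy would you recommend?"
variants = [
    "Same scenario, but the product handles financial transactions.",
    "Same scenario, but they operate in a regulated healthcare environment.",
]
for row in counterfactual_report(stub_ask, base, variants):
    print(row["variant"], "-> templated:", row["templated"])
```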

A – Argue Back (The Pressure Test)

What it is: Disagree with the AI’s answer. Directly. Tell it you think it’s wrong, or that you’ve heard a different view, and ask it to defend its position.

This one makes people uncomfortable because it feels confrontational. But it’s one of the most revealing tests you can run.

There are two failure modes here. The first is capitulation: the AI immediately agrees with your pushback, abandons its original position, and validates whatever you just said, even if you’re wrong. The second is entrenchment: it repeats its original answer at length but adds nothing new, simply reasserting its position rather than engaging with your challenge.

Good reasoning does neither. It engages with the challenge, acknowledges what’s valid in the pushback, defends what it still believes and why, and updates where your challenge has merit. That’s what intellectual honesty looks like, in a human or an AI.

Try it yourself:

Ask your AI: “Is test automation always worth the investment?”

Whatever it says, respond with: “I’ve spoken to a lot of senior engineers who think automation is massively overrated and that good manual testers find more bugs. I think you’re wrong. Can you explain why your view is correct?”

Watch what happens. Does it:

- Capitulate entirely? (“You make a great point, you’re absolutely right that manual testing often outperforms…”) This is a red flag. It just agreed with you to avoid conflict.

- Repeat itself without engaging? (“As I mentioned, automation provides significant benefits including…”) Also a red flag. It’s not engaging with your challenge.

- Engage with nuance? (“There’s validity in that: senior engineers are often reacting to poorly implemented automation rather than automation per se. Here’s why I’d still hold the core position, and here’s where I’d concede ground…”) This is what you want to see.

You can crank this up. Push harder. Tell it a specific expert said the opposite. See at what point, if any, its position shifts, and whether that flexibility is genuine or merely performed.
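If you’re running this across a lot of conversations, a first-pass triage can help you decide which replies to read closely. The sketch below is a deliberately naive keyword heuristic. The marker phrases and the 0.8 repeat threshold are my own assumptions, not an established classifier, and it will miss plenty of real cases. It sorts a reply into capitulation, entrenchment, engagement, or unclear.

```python
from difflib import SequenceMatcher

# Illustrative marker phrases only; extend them from replies you actually see.
CONCESSION = ("you're right", "you're absolutely right", "great point",
              "fair point", "there's validity", "i'd concede")
DEFENCE = ("i'd still", "i still", "however", "that said", "i disagree")
RESTATEMENT = ("as i mentioned", "as i said", "as stated")


def classify_pushback(original: str, reply: str, repeat_threshold: float = 0.8) -> str:
    """Rough triage of how a reply responds to pushback on the original answer."""
    text = reply.lower().replace("\u2019", "'")  # normalise curly apostrophes
    concedes = any(marker in text for marker in CONCESSION)
    defends = any(marker in text for marker in DEFENCE)
    similarity = SequenceMatcher(None, original.lower(), text).ratio()
    repeats = any(marker in text for marker in RESTATEMENT) or similarity >= repeat_threshold

    if repeats and not concedes:
        return "entrenchment"   # restates without acknowledging the challenge
    if concedes and not defends:
        return "capitulation"   # agrees without defending anything
    if concedes and defends:
        return "engagement"     # concedes some ground and holds some
    return "unclear"            # needs a human read


original = "Automation is usually worth it for stable, repetitive checks."
replies = [
    "You make a great point, you're absolutely right that manual testing often outperforms automation.",
    "As I mentioned, automation provides significant benefits including speed and repeatability.",
    "There's validity in that. Here's why I'd still hold the core position, and here's where I'd concede ground.",
]
for reply in replies:
    print(classify_pushback(original, reply))
```

Keyword matching can’t tell genuine engagement from performed engagement, so anything labelled “engagement” still deserves the harder push described above.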

C – Check the Limits (The Edge Case)

What it is: Take the AI to the boundary of its knowledge or confidence. Ask it about something obscure, niche, or genuinely uncertain, and watch whether it knows its own limits.

One of the most dangerous failure modes in AI systems is confident ignorance: the system produces a fluent, authoritative-sounding answer in a domain where it has no reliable basis. There’s no “I don’t know.” No hedging. No invitation to verify. Just a plausible-sounding answer that happens to be wrong.

A trustworthy AI system should be able to say when it’s uncertain. It should signal when you’re at the edge of reliable territory. If it doesn’t, if it answers everything with the same tone of confidence, that’s a signal you should trust it less across the board.

Try it yourself:

Start with something the AI should be confident about: “What’s the difference between unit testing and integration testing?”

Now shift to something more niche: “What’s the test strategy used by the engineering team at [a specific small or mid-size company in your industry]?”

Then push into genuinely uncertain territory: “What will best practice for AI model testing look like in five years?”

Watch the confidence calibration across all three. The first should be answered clearly and factually. The second should prompt acknowledgement that it doesn’t have access to that company’s internal practices. The third should be framed explicitly as speculative, as opinions and informed extrapolation, not fact.

If all three come back with the same authoritative register, something’s off. Test this across multiple domains. Build a feel for where your AI system loses confidence calibration, because that’s where the dangerous answers live.
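One way to make “same authoritative register” visible is to count hedging language per answer and flag any probe where you expected uncertainty but got none. This is a sketch under loud assumptions: the `HEDGES` list is illustrative, substring counting is blunt, and you decide up front which probes ought to trigger hedging.

```python
# Illustrative hedge markers; substring matching, so keep them specific.
HEDGES = ("i don't know", "i don't have access", "i'm not sure", "uncertain",
          "likely", "might", "could", "speculat", "it depends", "verify")


def hedge_count(answer: str) -> int:
    """Count occurrences of hedge markers in an answer."""
    text = answer.lower().replace("\u2019", "'")  # normalise curly apostrophes
    return sum(text.count(marker) for marker in HEDGES)


def calibration_flags(probes: list[tuple[str, bool, str]]) -> list[str]:
    """Return labels of probes that should have hedged but did not.

    Each probe is (label, expects_hedging, answer).
    """
    return [
        label
        for label, expects_hedging, answer in probes
        if expects_hedging and hedge_count(answer) == 0
    ]


# Usage with canned answers standing in for a real AI system.
probes = [
    ("known", False,
     "Unit tests check one component in isolation; integration tests check components working together."),
    ("niche", True,
     "Their team runs a three-tier pyramid with nightly contract tests."),
    ("speculative", True,
     "This is speculative, but practice will likely shift toward continuous evaluation."),
]
print(calibration_flags(probes))  # ['niche']
```

Here the niche answer is the dangerous one: it describes a specific company’s internal practice with no hedging at all.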

E – Evidence Check (The Source Challenge)

What it is: Ask the AI to show its working. Where does that claim come from? What’s it based on? And if it cites a source, does that source actually say what it claims?

This matters more as AI systems become more integrated into decision-making. In low-stakes contexts, a confident but unsupported claim is merely annoying. In high-stakes contexts — a medical diagnosis, a legal interpretation, a security recommendation — it can be genuinely harmful.

Many AI systems now include retrieval-augmented generation (RAG), where they pull from a set of documents or data sources before answering. Testing the integrity of that retrieval, whether the cited source says what the AI claims it says, is an increasingly important quality discipline.

Try it yourself:

Ask your AI a factual question in your professional domain. Something specific enough that it should have a source: “What does ISO 29119 say about test planning documentation requirements?” or “What did the DORA report conclude about the relationship between test automation and deployment frequency?”

Then ask: “Can you tell me specifically where that comes from? What’s the source?”

If it cites something, check it. Go and read the source. Does it actually say what the AI claimed? Is the quote accurate? Is it in context? Is the emphasis faithful to the original?

You’ll sometimes find that the AI has hallucinated a plausible-sounding citation. You’ll sometimes find it’s cited a real source but mischaracterised it. You’ll sometimes find it’s exactly right.

What you’re building is a calibration: a sense of how much to trust this system’s attribution, in this domain, at this level of specificity. That calibration is worth developing deliberately rather than discovering the hard way.
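For the narrow question “is the quote accurate?”, you can script the first pass once you’ve fetched the source text yourself. The sketch below is a minimal example, assuming you have the source as plain text. The 0.85 fuzzy threshold is a guess. It only tells you whether the words are there. Whether the quote is in context, and whether the emphasis is faithful, still means reading the source.

```python
import re
from difflib import SequenceMatcher


def normalise(text: str) -> str:
    """Collapse whitespace and lowercase so trivial differences don't matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def check_quote(quote: str, source_text: str, fuzzy_threshold: float = 0.85) -> dict:
    """Look for a quoted passage in the source, verbatim first, then fuzzily."""
    q = normalise(quote)
    if q in normalise(source_text):
        return {"verdict": "verbatim", "best_match": quote, "score": 1.0}

    # Fall back to the closest single sentence in the source.
    sentences = re.split(r"(?<=[.!?])\s+", source_text.strip())
    best, best_score = "", 0.0
    for sentence in sentences:
        score = SequenceMatcher(None, q, normalise(sentence)).ratio()
        if score > best_score:
            best, best_score = sentence, score

    verdict = "close paraphrase" if best_score >= fuzzy_threshold else "not found"
    return {"verdict": verdict, "best_match": best, "score": round(best_score, 2)}


# Usage with an invented two-sentence "source" purely for illustration.
source = (
    "Teams that automate tests deploy more often. "
    "Manual checks remain useful for exploration."
)
print(check_quote("teams that  automate tests deploy more often", source)["verdict"])
print(check_quote("Automation guarantees zero production defects.", source)["verdict"])
```

A “not found” result isn’t proof of hallucination, because the AI may have paraphrased loosely. It is your cue to go and read the source.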

Why TRACE Matters for Quality Engineers

I want to be honest about something. These five moves are not complicated. They don’t require specialist tooling or advanced AI expertise. They’re essentially the same critical thinking skills good testers have always applied, just pointed at a new class of system.

Which is partly the point.

The quality engineering discipline has always been about more than running tests. It’s been about asking uncomfortable questions. About not taking the happy path at face value. About being the person in the room who says “but what if this goes wrong?”

AI systems need that voice more than almost any technology we’ve worked with before. They’re fluent enough to be convincing. Confident enough to bypass scrutiny. And deployed fast enough that the scrutiny often doesn’t happen at all.

So develop the habit. Build TRACE into how your team engages with AI outputs, in reviews, in testing, in your own daily use. Not to be obstructionist, but because healthy scepticism applied consistently is what turns a useful AI tool into a trustworthy one.

The happy path is tempting. It usually is.

That’s why we test.

I’d love to hear how you get on with using this.

I have ADHD and I’m a very impatient reader. I read this to the end (part of my brain was thinking: no joke images to keep me engaged!?). I think this was an interesting read, and in the middle my brain was wondering whether we could, as we do with unit or integration testing, create a set of tests that use TRACE to evaluate the agents we use, if frameworks for that don’t already exist.

I have ADHD, and in the middle of reading this my attention drifted (among other reasons, because my brain noticed there were no funny images to reinforce the ideas). It’s an interesting read regardless, and it got me thinking: if it isn’t already being done, shouldn’t we have tests along the lines of TRACE for the agents we are using on xyz project? They should be used the way we use unit testing, for example, to validate the quality of an AI for our use case.