This is the post where two things I’ve been building separately finally meet.

When I started the Intelligent Quality Leadership series earlier this year, I had a model I wanted to stress-test and a set of ideas I needed to get out of my head and onto a page. What I didn’t expect was that writing it would force me to reckon with something important.

I’ve been building towards quality leadership my entire career. The AI dimension is more recent: applied, hands-on, and still evolving. What this series has done is show me where those two things intersect. And that intersection is where the real work is.

This post is where that intersection lands.

Where This Started

I’ve created Quality Engineering principles twice before, at two very different companies.

The first time was at easyJet, built in collaboration with our QE Architect at the time, Suman Bala. Bold, energetic, built for scale. “Quality as a Culture” sat at the centre of everything, surrounded by principles designed to rally a 120-person distributed organisation around a shared belief. The language was deliberate (“Automate ALL the RIGHT things”, “Testability as a foundation”) because at that size, you need something people can remember, repeat, and actually live by.

The second time was at Goodnotes. The bones were the same but the expression was different. More refined, more embedded in product thinking. “Sustainable Automation” instead of “automate the right things.” “Collaborative Quality Ownership” instead of a rallying cry. The principles had grown up with me.

Both sets still hold up. But neither of them accounts for what AI has done to our discipline. That gap is what this post is for.

Why a Manifesto

I spent a long time thinking about what to call this. Principles felt too passive. Framework felt too corporate. Pillars, charter, ethos: I considered them all and set each one aside.

I landed on Manifesto because it’s the right word for what this is. A manifesto isn’t a description of how things are. It’s a stake in the ground about how things should be. It invites adoption, challenge, and conversation. And that’s exactly what I want this to do.

These aren’t seven things I’ve observed in good teams. They’re seven commitments I believe quality leaders need to make, and keep making, if they’re serious about leading in an AI-first engineering world.

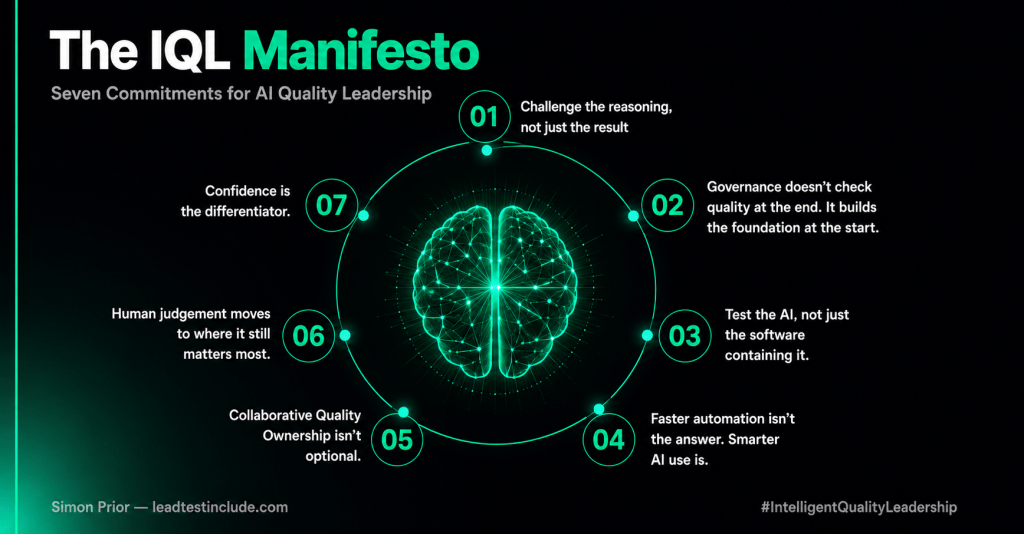

The IQL Manifesto

01 – Challenge the reasoning, not just the result

AI outputs can look right and be wrong. Quality leaders build cultures that interrogate why, not just what.

This is the mindset shift that everything else depends on. Traditional testing has a simple contract: given this input, I expect this output. AI breaks that contract. It’s confident, fluent, and plausible. It can be completely wrong without looking like it.
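To make that broken contract concrete, here is a minimal sketch in Python. It is an illustration, not the TRACE framework and not a complete evaluation approach. The function `check_summary` and its three checks are hypothetical names I've chosen for the example. The idea is that when you can't assert one exact output, you can still assert properties the output must satisfy, such as "every number quoted must appear in the source".

```python
import re
from typing import List

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def add(a: int, b: int) -> int:
    return a + b


# The traditional contract: one input, one expected output.
assert add(2, 3) == 5


def check_summary(source: str, summary: str, max_words: int = 50) -> List[str]:
    """Return the list of failed checks for an AI-written summary.

    Hypothetical checks for illustration only. There is no single
    'correct' summary to compare against, so we test properties instead.
    """
    problems: List[str] = []
    if not summary.strip():
        problems.append("empty summary")
    if len(summary.split()) > max_words:
        problems.append("too long")
    # A fluent summary can still invent figures: flag any number
    # that does not appear anywhere in the source text.
    source_numbers = set(NUMBER.findall(source))
    for number in NUMBER.findall(summary):
        if number not in source_numbers:
            problems.append(f"unsupported number: {number}")
    return problems


source_text = "Revenue grew 12% in 2023, driven by new customers."
plausible_but_wrong = "Revenue grew 45% in 2023 thanks to new customers."
print(check_summary(source_text, plausible_but_wrong))
```

Checks like these only catch what they are written to catch. They sit alongside human interrogation of the reasoning rather than replacing it.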

The skill quality leaders need to build, in themselves and their teams, isn’t just evaluating what an AI produced. It’s knowing how to interrogate why, and when to trust the answer versus when to push back hard. TRACE, the framework I developed in IQL Practical #1, is one way to do this. But the principle is bigger than any framework. It’s a habit of mind.

02 – Governance doesn’t check quality at the end. It builds the foundation at the start.

The earliest conversations about an AI feature are where the quality bar is set, whether you’re in the room or not.

There’s a version of AI governance that lives in slide decks and quarterly board updates. It’s tidy, well-presented, and almost completely disconnected from the moments that actually matter.

Real governance happens on a Tuesday morning, ten weeks before release, when a product manager sends a calendar invite for an AI feature scoping kickoff. The quality leader who understands this isn’t waiting for a test plan to think about risk. They’re in that room, asking the questions nobody else thought to ask yet. If you’re not there, the quality bar gets set without you. And it’s very hard to raise it later.

03 – Test the AI, not just the software containing it.

The failures that matter most in AI systems don’t look like bugs. They look like drift, bias, inconsistency, and cost you didn’t see coming.

Most teams are running their existing playbook against a fundamentally different kind of system. They test the integration layer. They check the UI. Someone runs a few prompts manually and confirms the responses look reasonable. The feature ships.

Three months later, the model gets updated. Outputs subtly change. A user in a different region gets a culturally inappropriate response. The AI starts hallucinating a statistic that sounds authoritative but is completely fabricated. None of it gets caught because none of it was being tested for.

Testing AI means covering five distinct dimensions: reliability across runs, behavioural consistency, safety at the edges, explainability, and cost you can sustain. If your AI testing strategy is your existing test strategy with an AI component bolted on, you don’t have an AI testing strategy. You have a gap you haven’t found yet.
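As one illustration of the first dimension, reliability across runs, here is a small Python sketch. It assumes you can wrap your model behind a function that takes a prompt and returns text. `consistency_rate`, `make_stub_model`, and the 0.9 threshold are hypothetical choices for this example, and the stub stands in for a real model call so the sketch runs on its own.

```python
import itertools
from collections import Counter
from typing import Callable, Iterable


def consistency_rate(ask: Callable[[str], str], prompt: str, runs: int = 10) -> float:
    """Ask the same prompt `runs` times and return the share of runs
    that agree with the most common (normalised) answer."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    # Normalise lightly so trivial casing/whitespace differences don't count.
    answers = [ask(prompt).strip().lower() for _ in range(runs)]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / runs


def make_stub_model(replies: Iterable[str]) -> Callable[[str], str]:
    """Stand-in for a real model call: cycles through canned replies."""
    cycle = itertools.cycle(list(replies))

    def ask(prompt: str) -> str:
        return next(cycle)

    return ask


# Hypothetical threshold: what counts as "reliable enough" is a
# product decision, not something this sketch can decide for you.
THRESHOLD = 0.9

flaky_model = make_stub_model(["Paris", "paris ", "Lyon", "Paris"])
rate = consistency_rate(flaky_model, "What is the capital of France?", runs=8)
print(f"consistency: {rate:.2f}, passes: {rate >= THRESHOLD}")
```

Exact-match agreement is a crude measure and suits short, factual answers best. For longer free-text responses you would need a different comparison, and the other four dimensions each need their own checks.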

04 – Faster automation isn’t the answer. Smarter AI use is.

AI can generate a thousand tests in minutes. The hard question is whether any of them are asking the right thing.

The rush to use AI for automation is creating a lot of volume and very little value. Teams are generating tests at scale without asking whether they’re generating the right tests. Speed becomes the metric when quality should be.

The risk isn’t that teams automate too slowly. It’s that they automate thoughtlessly and call it transformation. A quality leader’s job is to make sure AI amplifies good judgement rather than replacing the need for it. That’s a harder thing to lead than it sounds, because the volume looks impressive and the dashboard looks green.

05 – Collaborative Quality Ownership isn’t optional.

In an AI-first team, real ownership has to be deliberate, visible, and led.

AI has created a democratisation illusion around quality. The optimistic narrative goes: AI puts testing capability in everyone’s hands, so shared ownership should be easier to achieve than ever. I’d ask you to look more carefully at what’s actually happening in your teams.

When a developer generates a test suite using an AI tool, they’ve accessed a quality capability. They haven’t necessarily taken ownership of quality. Ownership means understanding what you’re testing and why, caring about what the output tells you, and being willing to have the difficult conversation when the picture isn’t clear. Generating a test file and merging it doesn’t require any of that.

The risk isn’t that AI keeps people away from quality. It’s that AI gives people the feeling of participating in quality without the responsibility that real participation requires. In an AI-first team, collaborative ownership doesn’t emerge naturally. It has to be designed in, made visible, and actively led.

06 – Human judgement moves to where it still matters most.

The decisions AI can’t make, the risks it can’t see, and the moments where someone needs to say no.

The human in the loop isn’t going anywhere. They’re just moving up.

As AI takes on more of the execution (writing tests, analysing results, flagging anomalies), the human role shifts. Not away from quality, but towards the parts of quality that require something AI genuinely can’t provide: contextual judgement, ethical reasoning, and the willingness to say “I don’t think we should ship this” when the data is inconclusive but the instinct is clear.

That’s not a limitation of AI maturity. It’s a deliberate quality decision. The teams that understand this will build better products. The ones that don’t will find out the hard way.

07 – Confidence is the differentiator.

In AI-first products, quality isn’t a checkbox. It’s what users feel when the system doesn’t let them down.

In a world where AI capabilities are increasingly commoditised, the product that wins isn’t necessarily the most powerful one. It’s the one users trust. The one that behaves consistently. The one that doesn’t surprise them in ways that erode confidence.

Quality engineering in an AI-first world is ultimately about building that confidence, not as a feature or as a compliance requirement, but as a competitive advantage. That’s what users feel when the system doesn’t let them down. And that feeling is what keeps them coming back.

What I Want You to Do With This

I didn’t write this to be read once and filed away. I wrote it to be used.

If something here resonates, take it into your next team conversation. Use it as a lens for where your quality practice has gaps. Challenge the parts you disagree with! I mean that genuinely, because the best frameworks get sharper through friction, not consensus.

If your organisation is figuring out what AI-first quality engineering actually looks like in practice, I’d love to talk. These commitments are a starting point, not a finished answer.

And if this feels like the beginning of something bigger… well. It might be.

The IQL Manifesto is part of the Intelligent Quality Leadership series on leadtestinclude.com. If you’re new to the series, start at the beginning.

Want to talk about any of this? Book time with me at calendly.com/simon-leadtestinclude/30min or find me on LinkedIn.